Opening Narrative

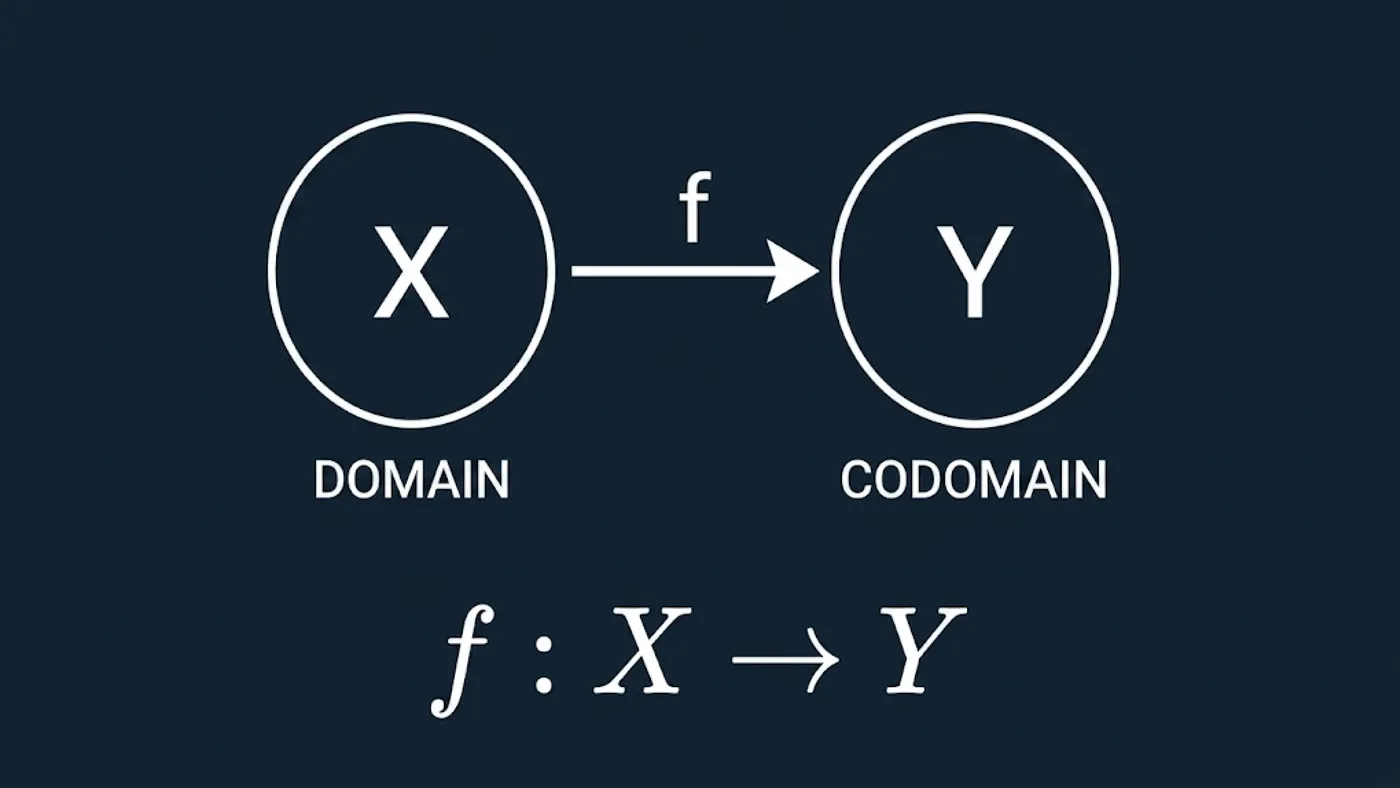

Function notation is the compact language used to describe how an AI component transforms inputs into outputs. Instead of repeatedly saying “the model takes this kind of data and returns that kind of prediction,” we express the same contract as $f: X \to Y$. This notation is short, precise, and stable across many model families.

The practical benefit is not elegance but reliability. When teams clarify what belongs to $X$ (the valid input space) and what belongs to $Y$ (the output space), they catch data-interface bugs earlier, document assumptions clearly, and reduce deployment surprises. This makes function notation one of the highest-leverage foundations for reading and building AI systems.

Core Learnings

- A model mapping is written as $f: X \to Y$, where $X$ is the domain and $Y$ is the codomain.

- A concrete prediction is written as $y = f(x)$ for one specific input $x \in X$.

- Deterministic mappings produce a single output per input, while probabilistic mappings produce output distributions.

- Many production failures around model APIs are domain/codomain contract failures, not optimization failures.

- Parameterized models are naturally expressed as $f_\theta: X \to Y$, where $\theta$ denotes the learned parameters.

Function Notation as an AI Mapping Language

When we write $f: X \to Y$, we are defining a transformation contract. The symbol $f$ names the mapping rule, not necessarily the architecture. A rule engine, decision tree, and neural network can all be represented with the same shape if they map inputs from $X$ into outputs in $Y$.

For one input instance $x \in X$, the output is $y = f(x)$. In machine learning, we often parameterize the mapping as $f_\theta$ to indicate that behavior depends on trainable parameters $\theta$. During training, $\theta$ changes while the contract stays fixed.
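As a minimal sketch of a parameterized mapping, the snippet below treats $f_\theta$ as an ordinary function that takes the parameters as an explicit argument. The `Theta` class, the one-feature logistic form, and the specific weight and bias values are assumptions chosen for illustration; they are not taken from any particular model in this course.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Theta:
    """Hypothetical parameter set: one weight and one bias."""
    weight: float
    bias: float


def f(theta: Theta, x: float) -> float:
    """f_theta: real number -> score in (0, 1), a one-feature logistic model."""
    return 1.0 / (1.0 + math.exp(-(theta.weight * x + theta.bias)))


# Two parameter settings, standing in for "before" and "after" training.
theta_before = Theta(weight=0.0, bias=0.0)
theta_after = Theta(weight=2.0, bias=-1.0)

x = 1.5
y_before = f(theta_before, x)  # 0.5, because weight and bias are both zero
y_after = f(theta_after, x)    # about 0.88

print(y_before, y_after)
```

Both calls accept the same kind of input and return the same kind of output. Only the parameters differ, which is the sense in which the contract stays fixed while behavior changes.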

This notation gives a common reading frame for technical docs, papers, and code. It also helps compare systems with different internal mechanisms but equivalent input/output interfaces.

Domain and Codomain in Real Systems

The domain is the set of valid inputs. In practice, it is constrained by schema shape, units, encoding, and preprocessing assumptions. If one system expects Celsius and another expects Fahrenheit, they do not share the same domain even if both process “temperature.”
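One way to make the Celsius/Fahrenheit point concrete is a membership test for the domain. The sketch below assumes a hypothetical domain of Celsius readings between -90 and 60 degrees; the `TemperatureReading` class, the bounds, and the function names are illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TemperatureReading:
    value: float
    unit: str  # "C" or "F"; other units are outside this sketch


def to_celsius(reading: TemperatureReading) -> TemperatureReading:
    """Convert a reading into the unit the hypothetical model expects."""
    if reading.unit == "C":
        return reading
    if reading.unit == "F":
        return TemperatureReading((reading.value - 32.0) * 5.0 / 9.0, "C")
    raise ValueError(f"unsupported unit: {reading.unit!r}")


def in_domain(reading: TemperatureReading) -> bool:
    """Membership test for the assumed domain X: Celsius values in [-90, 60]."""
    return reading.unit == "C" and -90.0 <= reading.value <= 60.0


fahrenheit = TemperatureReading(70.0, "F")
print(in_domain(fahrenheit))              # False: wrong unit for this domain
print(in_domain(to_celsius(fahrenheit)))  # True: about 21.1 degrees Celsius
```

Carrying the unit alongside the value is a design choice here: a bare `70.0` would pass a type check in either system, so the unit mismatch would stay silent.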

The codomain is the declared output space. Examples include label sets, score ranges, vectors, or probability distributions. If a downstream service expects calibrated probabilities but receives raw logits, that is a codomain mismatch.
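The logits-versus-probabilities mismatch can be sketched with a simple codomain check. The helper names below are hypothetical, and the check covers only range and normalization: it can tell raw logits from a probability vector, but it says nothing about whether those probabilities are calibrated.

```python
import math
from typing import List, Sequence


def softmax(logits: Sequence[float]) -> List[float]:
    """Map raw logits to a probability vector (max-shifted for numerical stability)."""
    shift = max(logits)
    exps = [math.exp(z - shift) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def is_probability_vector(values: Sequence[float], tol: float = 1e-9) -> bool:
    """Codomain check: every entry in [0, 1] and the entries sum to 1 within tol."""
    return all(0.0 <= v <= 1.0 for v in values) and abs(sum(values) - 1.0) <= tol


raw_logits = [2.0, -1.0, 0.5]
probabilities = softmax(raw_logits)

print(is_probability_vector(raw_logits))     # False: logits are not in the declared codomain
print(is_probability_vector(probabilities))  # True
```

A downstream service could run a check like this at its boundary so that a mismatch fails loudly instead of being read as a confidence value.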

Many integration failures come from silent changes to $X$ or $Y$. Making $f: X \to Y$ explicit in docs and tests turns those assumptions into verifiable contracts.

Deterministic vs Probabilistic Mappings

Deterministic mappings return one stable output for the same input under fixed parameters. Probabilistic mappings return uncertainty-aware outputs, often distributions such as $P(y \mid x)$.

Both still fit the same function frame. Deterministic systems map into concrete decision spaces, while probabilistic systems map into distribution spaces. The notation does not force one modeling philosophy; it provides a shared interface language.
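To illustrate that both kinds of mapping share one function frame, the sketch below gives two toy text classifiers the same input type and different output types. The type aliases, the keyword list, and the smoothed scoring formula are all assumptions made for this example; neither function is meant as a realistic spam filter.

```python
from typing import Callable, Dict

Label = str
Distribution = Dict[Label, float]

# Same domain (text); the codomains differ.
DeterministicModel = Callable[[str], Label]
ProbabilisticModel = Callable[[str], Distribution]


def keyword_rule(text: str) -> Label:
    """Deterministic mapping: text -> one label from {"spam", "ham"}."""
    return "spam" if "free money" in text.lower() else "ham"


def keyword_scorer(text: str) -> Distribution:
    """Probabilistic mapping: text -> distribution over {"spam", "ham"}."""
    lowered = text.lower()
    hits = sum(word in lowered for word in ("free", "money", "winner"))
    p_spam = (hits + 1) / (3 + 2)  # hypothetical smoothed score, always in (0, 1)
    return {"spam": p_spam, "ham": 1.0 - p_spam}


message = "FREE MONEY now"
label = keyword_rule(message)
distribution = keyword_scorer(message)

print(label)         # spam
print(distribution)  # spam near 0.6, ham near 0.4
```

The two aliases make the difference visible in the type signature: a caller that expects a `Label` cannot silently receive a `Distribution`.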

Example Walkthrough

- Define an input schema and call it $X$. Observe required fields, units, and allowed value ranges. Why: this sets explicit domain boundaries.

- Implement a deterministic baseline $f: X \to Y$. Observe identical outputs for repeated identical inputs. Why: this confirms deterministic behavior.

- Swap to a probabilistic model $f_\theta$ where $f_\theta(x) = P(y \mid x)$. Observe confidence-valued outputs instead of hard labels. Why: codomain semantics changed.

- Send invalid input $x \notin X$. Observe validation failure or coercion warnings. Why: domain checks protect model reliability.

- Retrain parameters from $\theta$ to $\theta'$. Observe that $f_\theta(x)$ and $f_{\theta'}(x)$ may differ while the contract shape remains identical. Why: interface stability and model behavior can evolve independently.

- Apply a decision rule on top of scores (for example, classify positive if $f_\theta(x) \ge \tau$ for a chosen threshold $\tau$). Observe how policy thresholds transform codomain values into actions. Why: deployment logic depends directly on output interpretation.
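The last three steps of the walkthrough can be sketched end to end: reject an invalid input, compare scores under two parameter sets, and apply a threshold. The schema fields, value ranges, parameter values, and thresholds below are hypothetical placeholders, not values from a real system.

```python
import math
from typing import Dict

# Hypothetical input schema X: required field name -> (min, max) allowed value.
SCHEMA = {"temperature_c": (-90.0, 60.0), "humidity_pct": (0.0, 100.0)}


def validate(x: Dict[str, float]) -> None:
    """Raise ValueError if x lies outside the assumed domain."""
    for name, (low, high) in SCHEMA.items():
        if name not in x:
            raise ValueError(f"missing field: {name}")
        if not low <= x[name] <= high:
            raise ValueError(f"{name}={x[name]} outside [{low}, {high}]")


def score(theta: Dict[str, float], x: Dict[str, float]) -> float:
    """f_theta(x): logistic score in (0, 1) for a validated input."""
    validate(x)
    z = theta["bias"] + sum(theta[name] * x[name] for name in SCHEMA)
    return 1.0 / (1.0 + math.exp(-z))


def decide(p: float, tau: float) -> str:
    """Decision rule layered on the score: positive if p >= tau."""
    return "positive" if p >= tau else "negative"


theta = {"bias": -3.0, "temperature_c": 0.1, "humidity_pct": 0.02}
theta_prime = {"bias": -4.0, "temperature_c": 0.1, "humidity_pct": 0.02}
x = {"temperature_c": 25.0, "humidity_pct": 40.0}

p_old = score(theta, x)        # above 0.5
p_new = score(theta_prime, x)  # below 0.5 after the hypothetical retraining

print(decide(p_old, tau=0.5), decide(p_new, tau=0.5))  # positive negative
print(decide(p_old, tau=0.8))                          # negative: stricter policy
```

Calling `score(theta, {"temperature_c": 25.0})` raises `ValueError` because a required field is missing. This sketch deliberately fails on invalid input rather than coercing it.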

Related topics


Links to this page (incoming)

| Linked page | Relation note |
|---|---|
| AI Starter Course: What is AI? | Uses function notation as the first formal frame for model mappings. |
| AI Starter Course: Goal trees | Reuses domain/function language for state-transition structure. |
| AI Starter Course: Expert systems | Applies notation to symbolic rule evaluation and confidence logic. |
| AI Starter Course: Uninformed search | References notation for evaluation and state expansion definitions. |
| AI Starter Course: Heuristic search | Uses $h(n)$-style scoring notation for search guidance. |
| AI Starter Course: Local search | Uses objective-function notation over candidate states. |
| AI Starter Course: Constraint satisfaction | Uses set and subscript notation for variable-domain formulations. |
| AI Starter Course: Planning (STRIPS) | Uses formal predicate/action representations mapped with function syntax. |
| AI Starter Course: Intro to machine learning | Introduces hypothesis mappings and model families via function form. |
| AI Starter Course: Decision trees | Represents split-based prediction as input-output mapping. |
| AI Starter Course: Neural networks | Uses layered mappings and activation functions with consistent notation. |
| AI Starter Course: Backpropagation | References parameterized mappings like $f_\theta(x)$. |
| AI Starter Course: CNNs | Uses function composition notation across convolution blocks. |
| AI Starter Course: Knowledge representations | Connects symbolic structures to formal mapping semantics. |
| AI Starter Course: Transformers | Uses query-key-value transformations in mapping notation. |
| Big-O growth intuition for search | Links notation setup before complexity expressions are introduced. |
| Certainty factors in expert systems | Links notation setup before confidence-combination formulas. |

Links from this page (outgoing)

| Linked topic | Relation note |
|---|---|
| Big-O growth intuition for search | Applies the same notation discipline to algorithm growth analysis. |
| Certainty factors in expert systems | Reuses function/variable notation in uncertainty-aware rule systems. |

Reference Table

| Symbol or Term | Quick Meaning | Detailed Link |
|---|---|---|
| $f$ | Mapping function implemented by the AI component. | Function Notation as an AI Mapping Language |
| $X$ | Domain: set of valid inputs. | Domain and Codomain in Real Systems |
| $Y$ | Codomain: declared output space. | Domain and Codomain in Real Systems |
| $x$ | One concrete input instance with $x \in X$. | Function Notation as an AI Mapping Language |
| $f(x)$ | Output of $f$ evaluated at input $x$. | Function Notation as an AI Mapping Language |
| $f_\theta$ | Parameterized mapping with learnable parameter set $\theta$. | Example Walkthrough |
| Deterministic mapping | Same input gives same output under fixed parameters. | Deterministic vs Probabilistic Mappings |
| Probabilistic mapping | Output is a distribution such as $P(y \mid x)$. | Deterministic vs Probabilistic Mappings |
| Input schema | Operational data contract that defines practical domain validity. | Domain and Codomain in Real Systems |