Opening Narrative

Certainty factors (CFs) are a practical uncertainty formalism used in many rule-based expert systems. They were designed for settings where experts can express relative confidence in rules, but full probabilistic models are expensive or unavailable.

The core idea is simple: keep symbolic interpretability from rules, then attach explicit confidence strengths to intermediate and final conclusions. This creates a traceable reasoning process while acknowledging uncertain evidence.

Core Learnings

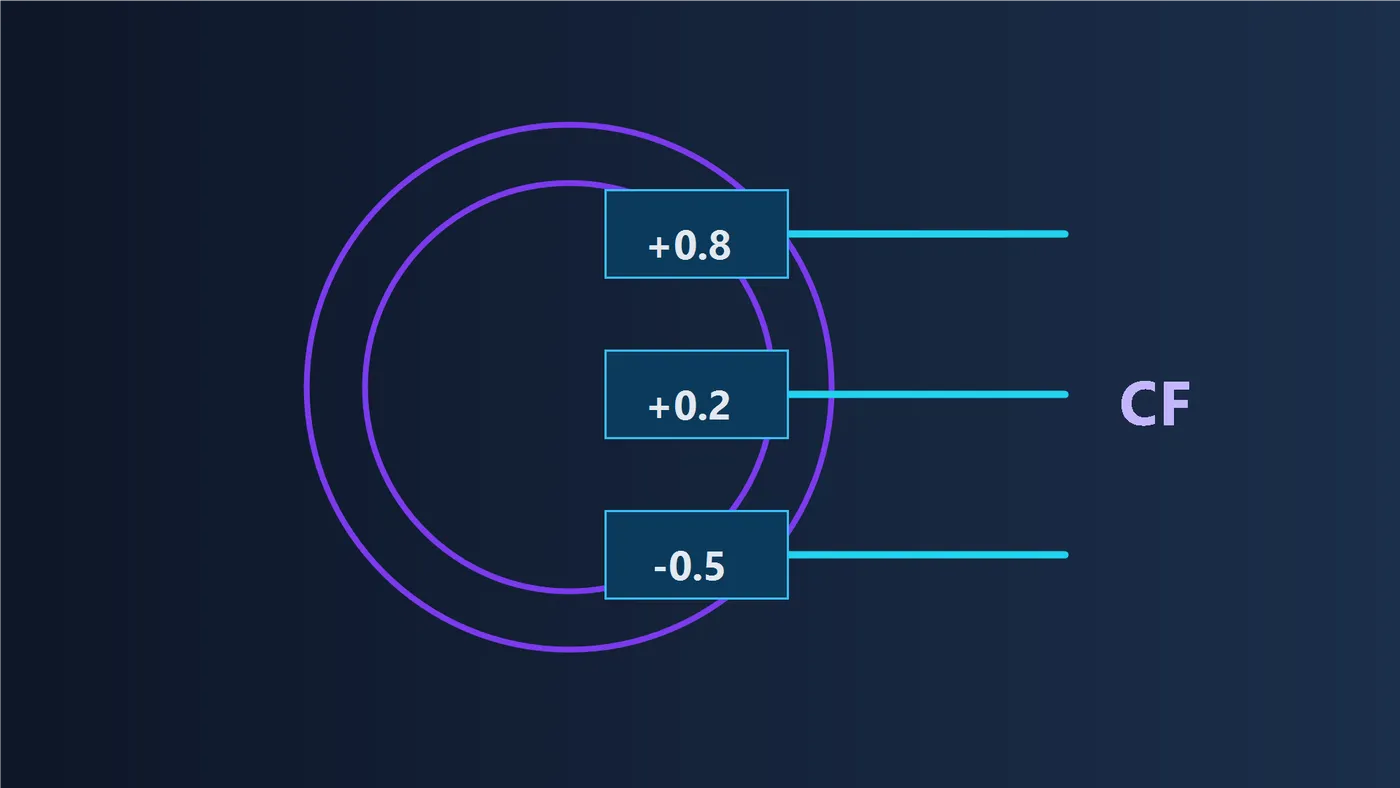

- A certainty factor is a bounded confidence score, commonly expressed on a scale such as $[-1, 1]$.

- Positive CF values support a hypothesis, negative values oppose it, and values near $0$ indicate weak evidence.

- CF systems require explicit combination logic for supportive and conflicting evidence.

- CF values are not probabilities and should not be interpreted as calibrated likelihoods by default.

- CF reasoning remains useful where interpretability and rule-level traceability are required.

What a Certainty Factor Represents

A certainty factor quantifies the strength of the belief update contributed by evidence. In common conventions, $CF > 0$ indicates support, $CF < 0$ indicates contradiction, and $CF \approx 0$ indicates limited impact.

Because a CF is a confidence-style quantity, it is best interpreted comparatively (stronger vs. weaker support), not as a direct probability claim. A high CF means “strong support under this rule system,” not automatically an equally high probability that the hypothesis is true.
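A minimal Python sketch of this sign-based reading, assuming the $[-1, 1]$ scale. The names `validate_cf` and `describe_cf` and the weak-evidence threshold of $0.2$ are hypothetical choices for illustration; the function labels only direction and strength and deliberately does not convert a CF into a probability.

```python
def validate_cf(cf: float) -> float:
    """Raise if a certainty factor falls outside the assumed [-1, 1] scale."""
    if not -1.0 <= cf <= 1.0:
        raise ValueError(f"CF must lie in [-1, 1], got {cf}")
    return cf


def describe_cf(cf: float, weak_threshold: float = 0.2) -> str:
    """Return a qualitative reading of a CF: 'supports', 'opposes', or 'weak'.

    weak_threshold is a hypothetical cut-off, not a standard value.
    """
    validate_cf(cf)
    if abs(cf) < weak_threshold:
        return "weak"
    return "supports" if cf > 0 else "opposes"


for value in (0.8, -0.6, 0.05):
    print(value, describe_cf(value))
```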

How Confidence Propagates Through Rule Chains

Rule-based systems often infer conclusions in multiple hops. An early finding can trigger an intermediate hypothesis, which then contributes to a higher-level recommendation. CF propagation keeps these transitions explicit.

A typical pattern is attenuation: if upstream evidence is uncertain and the rule itself is only moderately reliable, resulting downstream confidence should be capped accordingly. Supportive contributions are combined to increase confidence, while contradictory contributions reduce net confidence.
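For concreteness, one widely cited convention is the MYCIN/EMYCIN-style algebra, shown here as an illustrative assumption rather than the only option. $CF(r)$ is the strength of a rule $r: e \Rightarrow h$, $CF(e)$ the confidence in its premise, and $x$, $y$ are two contributions to the same hypothesis.

$$
\begin{aligned}
CF(h) &= CF(r)\cdot\max\bigl(0,\ CF(e)\bigr) && \text{serial propagation}\\
CF(e_1 \wedge e_2) &= \min\bigl(CF(e_1),\ CF(e_2)\bigr) && \text{conjunctive premise}\\
CF(e_1 \vee e_2) &= \max\bigl(CF(e_1),\ CF(e_2)\bigr) && \text{disjunctive premise}\\
\operatorname{comb}(x, y) &=
\begin{cases}
x + y\,(1 - x), & x \ge 0,\ y \ge 0\\
x + y\,(1 + x), & x < 0,\ y < 0\\
\dfrac{x + y}{1 - \min(|x|,\,|y|)}, & \text{otherwise}
\end{cases}
\end{aligned}
$$

The first line produces attenuation: a moderately reliable rule applied to uncertain evidence yields a CF smaller than either factor. The case split makes supportive values accumulate with diminishing returns, while values of opposite sign partly cancel.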

The exact algebra depends on system design, but the operational objective is consistent: preserve explanation quality while preventing overconfident conclusions from fragile evidence.
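A Python sketch of propagation and combination, assuming classic MYCIN/EMYCIN-style conventions (serial propagation as $CF(r)\cdot\max(0, CF(e))$ and the usual three-case combination function). The names `propagate`, `combine`, and `combine_all`, and all numbers in the example chain, are hypothetical.

```python
from functools import reduce


def propagate(rule_cf: float, premise_cf: float) -> float:
    """Serial propagation: CF(rule) * max(0, CF(premise)).

    A premise with CF <= 0 contributes nothing (the rule does not fire).
    """
    return rule_cf * max(0.0, premise_cf)


def combine(x: float, y: float) -> float:
    """Parallel combination of two CF contributions to the same hypothesis."""
    if x >= 0 and y >= 0:
        # Supportive evidence accumulates with diminishing returns.
        return x + y * (1 - x)
    if x < 0 and y < 0:
        # Opposing evidence accumulates symmetrically toward -1.
        return x + y * (1 + x)
    # Conflicting signs: net effect, rescaled by the weaker magnitude.
    denom = 1 - min(abs(x), abs(y))
    if denom == 0:
        raise ValueError("combining CF +1 with CF -1 is undefined here")
    return (x + y) / denom


def combine_all(contributions: list[float]) -> float:
    """Fold any number of contributions, starting from a neutral CF of 0."""
    return reduce(combine, contributions, 0.0)


# Hypothetical two-hop chain: a finding (CF 0.9) supports an intermediate
# hypothesis through a rule of strength 0.7, which supports a recommendation
# through a rule of strength 0.8. Confidence attenuates at each hop.
intermediate = propagate(0.7, 0.9)
recommendation = propagate(0.8, intermediate)
print(round(intermediate, 3), round(recommendation, 3))  # 0.63 0.504

# Two supportive contributions and one conflicting one.
print(round(combine_all([0.6, 0.5, -0.4]), 3))  # 0.667
```

Under this convention the combination is commutative and associative (away from the undefined $+1$/$-1$ clash), so the order in which rules fire does not change the final CF.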

Certainty Factors vs Probabilities

Probability models aim for coherence under probabilistic axioms and support inference over full event structures. CF systems prioritize practical expert knowledge capture and transparent rule traces.

Both have valid use cases. CF approaches are often effective in interpretable rule-driven environments. Probabilistic approaches are preferred when calibrated risk estimates and formally consistent uncertainty updates are required.

A robust practice is semantic discipline: keep CF language as confidence strength unless explicit calibration work has mapped CF outputs to probabilistic interpretations.
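One way to see that equal CF values need not mean equal probabilities is the early MYCIN-era definition, assumed here for illustration. It derives a CF from how far evidence moves a hypothesis away from its prior: a measure of belief (MB) for increases, a measure of disbelief (MD) for decreases, and $CF = MB - MD$. The function names and the two cases are hypothetical, and many practical CF systems elicit CFs directly from experts instead, so this is not a general calibration recipe.

```python
def measure_of_belief(prior: float, posterior: float) -> float:
    """MB: relative increase in belief in h after observing e."""
    if prior == 1.0:
        return 1.0
    return (max(posterior, prior) - prior) / (1.0 - prior)


def measure_of_disbelief(prior: float, posterior: float) -> float:
    """MD: relative decrease in belief in h after observing e."""
    if prior == 0.0:
        return 1.0
    return (prior - min(posterior, prior)) / prior


def cf_from_probabilities(prior: float, posterior: float) -> float:
    """CF = MB - MD under the assumed early MYCIN-style definition."""
    return measure_of_belief(prior, posterior) - measure_of_disbelief(prior, posterior)


# Two hypothetical cases with different posteriors but the same CF.
low = cf_from_probabilities(prior=0.2, posterior=0.6)
high = cf_from_probabilities(prior=0.8, posterior=0.9)
print(round(low, 3), round(high, 3))  # 0.5 0.5
```

Both cases yield a CF of about $0.5$, yet the posterior probabilities are $0.6$ and $0.9$: the CF measures the relative shift in belief, not the resulting likelihood.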

Example Walkthrough

- Define one rule with a moderate positive confidence for a hypothesis. Observe moderate support, not certainty. Why: one rule should not dominate the conclusion.

- Add a second supportive rule with a positive CF. Observe increased confidence with diminishing returns. Why: combined support should grow but stay within the CF bound.

- Add one conflicting rule with a negative CF. Observe a reduction in net confidence. Why: contradiction must explicitly affect decisions.

- Lower the reliability of one upstream measurement. Observe a reduced downstream CF after propagation. Why: uncertainty should attenuate through chains.

- Compare the final CF output with a separate probabilistic model output on the same case. Observe similar numbers can still have different semantics. Why: value equality does not imply interpretation equality.

- Review the explanation trace of contributing rules and strengths. Observe which evidence dominated the final conclusion. Why: trust depends on inspectable reasoning paths.
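The walkthrough steps can be sketched in Python, assuming classic MYCIN/EMYCIN-style propagation and combination. The rule names, strengths ($0.6$, $0.5$, $-0.4$), and the reduced premise confidence of $0.5$ are hypothetical values chosen for illustration.

```python
from functools import reduce


def propagate(rule_cf: float, premise_cf: float) -> float:
    """Assumed MYCIN-style serial propagation: CF(rule) * max(0, CF(premise))."""
    return rule_cf * max(0.0, premise_cf)


def combine(x: float, y: float) -> float:
    """Assumed EMYCIN-style parallel combination of two contributions."""
    if x >= 0 and y >= 0:
        return x + y * (1 - x)          # supportive: diminishing returns
    if x < 0 and y < 0:
        return x + y * (1 + x)          # opposing: mirror image of the above
    denom = 1 - min(abs(x), abs(y))     # conflicting: net effect, rescaled
    if denom == 0:
        raise ValueError("combining CF +1 with CF -1 is undefined here")
    return (x + y) / denom


def evaluate(rules):
    """Fire each (name, rule_cf, premise_cf) rule; return (final CF, trace)."""
    trace = [(name, propagate(rule_cf, premise_cf))
             for name, rule_cf, premise_cf in rules]
    final = reduce(combine, (cf for _, cf in trace), 0.0)
    return final, trace


# Hypothetical rules: (name, rule strength, confidence in the rule's premise).
step1 = [("rule_a", 0.6, 1.0)]                 # one supportive rule
step2 = step1 + [("rule_b", 0.5, 1.0)]         # second supportive rule
step3 = step2 + [("rule_c", -0.4, 1.0)]        # one conflicting rule
step4 = [("rule_a", 0.6, 0.5)] + step3[1:]     # rule_a's input is less reliable

stages = [("one rule", step1), ("two supportive", step2),
          ("plus conflict", step3), ("weaker upstream", step4)]
for label, rules in stages:
    final, _ = evaluate(rules)
    print(f"{label}: CF = {final:.3f}")

# Explanation trace for the last stage, largest contribution first.
_, trace = evaluate(step4)
for name, cf in sorted(trace, key=lambda item: abs(item[1]), reverse=True):
    print(f"  {name}: {cf:+.3f}")
```

With these assumed numbers, the four stages print CF values of 0.600, 0.800, 0.667, and 0.417, and the trace ranks rule_b (+0.500) ahead of rule_c (-0.400) and rule_a (+0.300). The comparison with a probabilistic model is not modeled here.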

Related topics


Links to this page (incoming)

| Linked page | Relation note |
|---|---|
| AI Starter Course: Expert systems | Uses CF notation and rule-confidence concepts directly in symbolic clinical reasoning. |
| Function notation for AI | Provides base variable/function notation used in CF formula expressions. |
| Big-O growth intuition for search | Connects uncertainty-rule systems with scaling and evaluation-cost concerns. |

Links from this page (outgoing)

| Linked topic | Relation note |
|---|---|
| Function notation for AI | Supplies notation foundations for CF symbols and rule expressions. |
| Big-O growth intuition for search | Complements CF logic with computational scaling intuition. |
| AI Starter Course: Expert systems | Shows a concrete applied setting where CF-style reasoning is used. |

Reference Table

| Symbol or Term | Quick Meaning | Detailed Link |
|---|---|---|
| $CF$ | Certainty factor score for support/opposition strength. | What a Certainty Factor Represents |
| $CF > 0$ | Evidence supports the hypothesis. | What a Certainty Factor Represents |
| $CF < 0$ | Evidence opposes the hypothesis. | What a Certainty Factor Represents |
| $CF \approx 0$ | Evidence has weak or ambiguous effect. | What a Certainty Factor Represents |
| Rule strength | Confidence attached to a rule implication. | How Confidence Propagates Through Rule Chains |
| Propagation | Passing confidence through multi-step inference chains. | How Confidence Propagates Through Rule Chains |
| Support combination | Operator for merging multiple supportive contributions. | How Confidence Propagates Through Rule Chains |
| Conflict combination | Operator for reconciling contradictory contributions. | How Confidence Propagates Through Rule Chains |
| Calibration gap | Mismatch between CF semantics and calibrated probabilities. | Certainty Factors vs Probabilities |