Opening Narrative

Big-O notation is a language for growth behavior. It answers the question: as a problem gets larger, how does required computation or memory scale? This is different from asking how fast one implementation runs on one machine.

For AI search and planning tasks, growth behavior determines feasibility. A method that looks fine on toy examples may become unusable under larger branching, deeper goals, or tighter latency constraints. Reading asymptotic expressions early helps teams choose methods that remain viable in production.

Core Learnings

- Big-O summarizes asymptotic growth, not exact runtime.

- Search workloads are often governed by branching factor and depth .

- Expressions like signal combinatorial growth and potential infeasibility.

- Complexity classes guide algorithm selection before implementation effort is spent.

- Constant-factor optimization helps, but growth-class changes usually dominate at scale.

Big-O as Growth Language, Not Stopwatch Timing

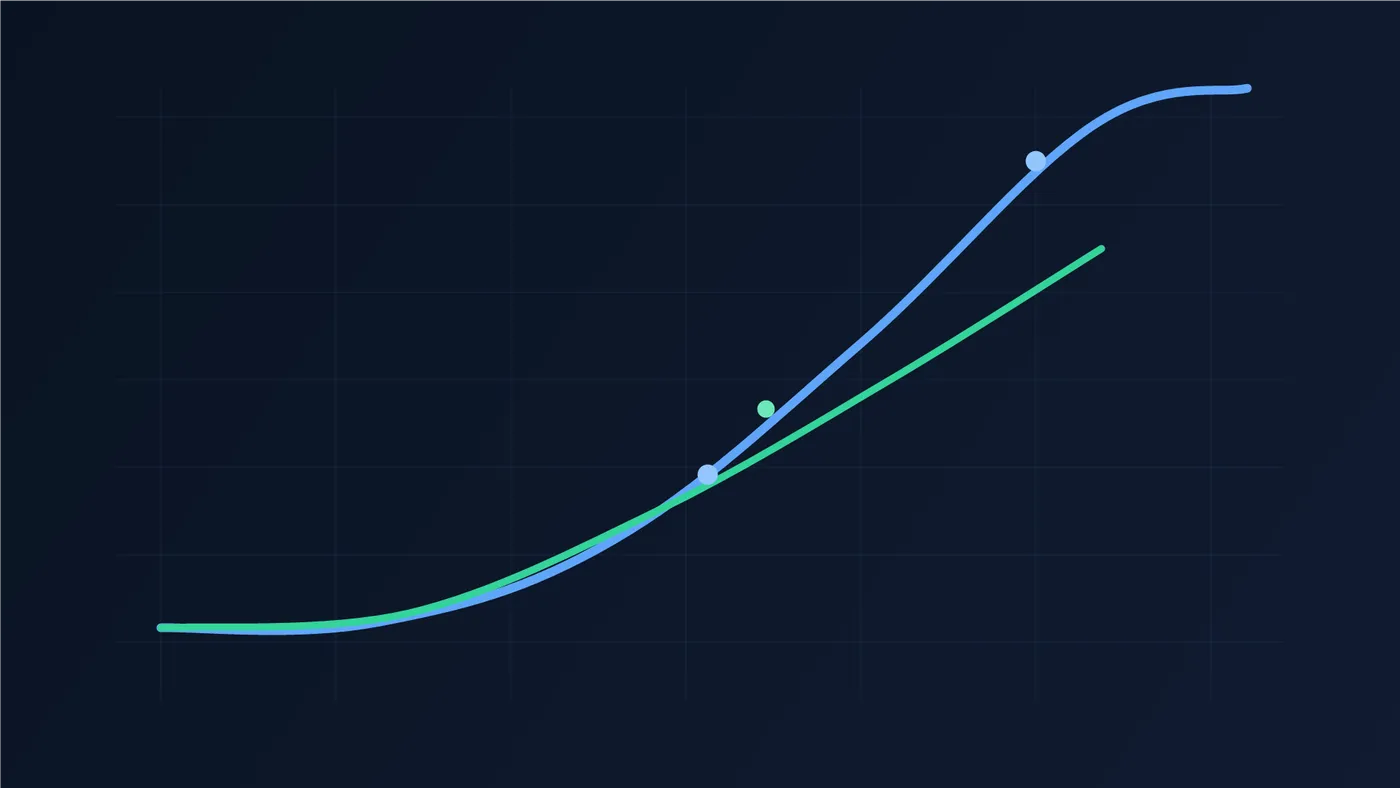

Big-O expresses the upper-order growth term of a cost function. If one algorithm scales as and another as , the second eventually dominates as grows, even if benchmark constants initially hide the difference.

This abstraction is useful because production systems evolve. Input size, model complexity, and query volume increase over time. Without growth analysis, performance planning becomes reactive and expensive.

Why Search Explodes: Branching Factor and Depth

In many search problems, total explored states are dominated by the largest depth layer. If average branching factor is and solution depth is , a rough expansion profile is:

For moderate or large , this is dominated by , which motivates complexity descriptions like . Small increases in depth can multiply work dramatically.

This same growth pattern often affects memory, not only time. Breadth-heavy strategies can fail from frontier size limits before compute throughput is exhausted.

Interpreting Big-O Without Common Mistakes

Mistake 1: treating Big-O as exact timing. A method in can still be slow with large constants.

Mistake 2: ignoring data regime. If is fixed and tiny, asymptotic differences may not matter operationally.

Mistake 3: mixing worst-case, average-case, and expected-case claims without labeling assumptions.

Mistake 4: chasing constant-factor micro-optimizations when the dominant issue is exponential growth.

Example Walkthrough

- Choose baseline parameters and . Observe approximate frontier growth across levels. Why: builds geometric intuition.

- Increase only depth to . Observe multiplicative expansion, not additive increase. Why: depth can dominate feasibility.

- Keep and reduce branching to via pruning constraints. Observe large state-count reduction. Why: branching control often has highest leverage.

- Compare uninformed search against heuristic-guided search under identical goals. Observe reduced expansions with effective heuristic guidance. Why: informed strategies reduce effective search effort.

- Track both expansions and peak memory usage. Observe where memory limits fail first. Why: space complexity can be the practical bottleneck.

- Introduce a misleading heuristic and re-measure. Observe degraded performance and possible re-expansion costs. Why: heuristic quality assumptions matter for real-world complexity.

Related topics

Keep this section updated whenever new pages link to this deep dive or when this page adds new outbound links.

Links to this page (incoming)

| Linked page | Relation note |

|---|---|

| AI Starter Course: What is AI? | Uses Big-O as first complexity anchor for model scaling discussion. |

| AI Starter Course: Goal trees | Connects tree growth and complexity across depth/branching. |

| AI Starter Course: Expert systems | References complexity trade-offs in symbolic inference systems. |

| AI Starter Course: Uninformed search | Applies directly to BFS/DFS search costs. |

| AI Starter Course: Heuristic search | Uses complexity framing to justify heuristics and admissibility constraints. |

| AI Starter Course: Local search | Relates iteration budgets and landscape exploration costs. |

| AI Starter Course: Constraint satisfaction | Uses complexity intuition for pruning and consistency methods. |

| AI Starter Course: Planning (STRIPS) | Links planning-state expansion to branching-depth growth. |

| AI Starter Course: Intro to machine learning | Uses growth classes to discuss data and model scaling behavior. |

| AI Starter Course: Decision trees | References complexity in split selection and inference path depth. |

| AI Starter Course: Neural networks | Connects complexity intuition to architecture-depth trade-offs. |

| AI Starter Course: Backpropagation | Uses complexity perspective for training-step cost reasoning. |

| AI Starter Course: CNNs | Links tensor operations to scaling and computational budgets. |

| AI Starter Course: Knowledge representations | References representational choices and their search/inference cost. |

| AI Starter Course: Transformers | Uses growth reasoning for sequence-length and attention cost framing. |

| Function notation for AI | Introduces symbolic notation needed to parse complexity expressions. |

| Certainty factors in expert systems | Links complexity thinking for confidence-rule evaluation overhead. |

Links from this page (outgoing)

| Linked topic | Relation note |

|---|---|

| Function notation for AI | Provides notation primer used in Big-O and search formulas. |

| Certainty factors in expert systems | Connects scaling concerns to uncertainty-aware symbolic systems. |

Reference Table

| Symbol or Term | Quick Meaning | Detailed Link |

|---|---|---|

| Big-O | Asymptotic notation for dominant growth trend. | Big-O as Growth Language, Not Stopwatch Timing |

| Generic input-size variable. | Big-O as Growth Language, Not Stopwatch Timing | |

| Average branching factor. | Why Search Explodes: Branching Factor and Depth | |

| Search depth to solution or horizon. | Why Search Explodes: Branching Factor and Depth | |

| Typical growth pattern for broad uninformed search. | Why Search Explodes: Branching Factor and Depth | |

| Time complexity | How operation count scales with input growth. | Big-O as Growth Language, Not Stopwatch Timing |

| Space complexity | How memory usage grows with problem size. | Why Search Explodes: Branching Factor and Depth |

| Worst case | Upper-limit behavior under most difficult valid inputs. | Interpreting Big-O Without Common Mistakes |

| Heuristic quality | How guidance accuracy affects effective search effort. | Example Walkthrough |